I mean, sure…

But knowing it’s entertainment brings on a whole other set of ethical issues.

C’mon Wim, who among us hasn’t destroyed democracy, brainwashed the vulnerable, and put minorities of all kinds in grave danger, in the name of ratings?

To be honest, I don’t see this as surprising. The Republicans see abortion as being illegal and punishable by the state. Why on earth would they allow this data to be blocked from searches?

And in states where abortion isn’t illegal, I’m not sure there is a good argument why this data shouldn’t be available under a search warrant, just as plenty of other personal information is if the police can convince a judge they have probable cause.

Personally, I can see a good argument that although people putting information up on the internet isn’t necessarily the platforms aiding and abetting, algorithms are a platform’s version of speech and they should be held accountable for them.

Since its enactment in 1996, Section 230 has been interpreted by courts to shield online platforms from liability for almost any offense committed by users. This protection for user behavior enabled the growth of the current online ecosystem of search engines, social media sites, blogs, message boards, user-generated encyclopedias and shopping sites. And so it has variously been dubbed the internet’s “Magna Carta,” its “First Amendment” and the “Twenty-Six Words That Created The Internet.”

But Section 230 has also produced negative effects. Online platforms have been used for harassment, death threats, defamation, discrimination, revenge ■■■■, fraudulent product sales, the illegal purchase of weapons and drugs resulting in death, and other illicit behavior. In most cases, platforms have been absolved of any responsibility thanks to Section 230’s protections. Gonzalez v. Google brings the negative effects that come with Section 230 before the court for the first time.

In 2015, Islamic State-linked militants murdered 23-year-old American student Nohemi Gonzalez amid a terrorist attack in Paris that left 129 people dead. Gonzalez’s family sued Google for aiding and abetting terrorism under the Anti-Terrorism Act. The family claimed that YouTube’s algorithmic recommendation engine suggested and promoted videos posted by the Islamic State that recruited followers and encouraged violence. At issue in the case is whether or not these algorithms are themselves covered by Section 230’s liability protection.

This one is also interesting in that there is support from across the political landscape on both sides of the issue.

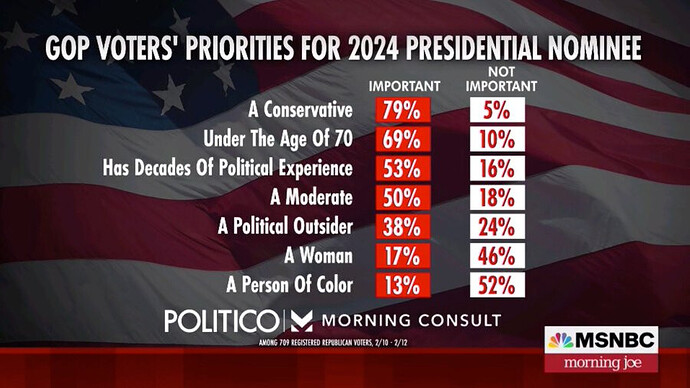

Hopefully indicates that Trump will find it more challenging than expected to get the nomination.

Very dangerous precedent and hopefully the case fails.

I don’t buy it.

I also don’t buy 50% wanting a moderate. Although what a Republican considers a “moderate” is probably someone we’d consider a raging right winger …

Moderate is possibly ‘doesn’t want to storm the capitol and forcibly seize power’.

129% want a conservative or a moderate.

Anyway, Nikki Haley is doomed, but I guess it didn’t need a poll to tell us that.

Yep. Like they see Cheney as a moderate though she was more deeply and reliably conservative than any of them, particularly the radical MAGA wing, but she wasn’t going to back Jan 6.

I’m guessing she’s just Trump’s stalking horse. Candidates are very hesitant to nominate for fear of Trump, but having Haley out there will flush them out; otherwise she just gets all the press. He will then get to work trying to destroy them (e.g. DeSantis, who wants to hold off as long as possible in the hope that Trump doesn’t run).

If he wins she will be his foreign secretary.

And that’s just a conservative estimate.

Why do you say that? I’ve got to admit, I think I lean the other way although I haven’t looked at the issue closely. But I tend to think that as soon as the website is making its own decisions over what material is provided, then it also has skin in the game and responsibility.

I’m sure Dems have some weak members at the state level. But this lady is in charge of the Michigan GOP!

Karamo has moved on — defeating former attorney general candidate Matt DePerno to become the next GOP state chair. Interestingly, Trump had endorsed DePerno for the job, despite his nod to Karamo in the past.

Here’s the Washington Post’s coverage on Karamo’s rhetoric, especially her belief in demons.

Karamo argued that Christianity belonged at the core of American politics, called evolution “one of the biggest frauds ever perpetuated on society,” and asserted the existence of demons.

“When we start talking about the spiritual reality of the demonic forces, it’s like, ‘Oh, my God, this is crazy, we can’t go there,’” Karamo said. “No. It’s like, did you read the Bible? Didn’t Jesus perform exorcisms? … Scriptures are clear. And so if we’re not operating as though the spirit realities of the world exist, we’re going to fail every time.”

Karamo’s beliefs were also catalogued by CNN’s KFile team, which noted her belief that abortion is a “satanic practice” that, according to her, essentially boils down to “child sacrifice.” Karamo has affiliated with QAnon in the past, plus she once claimed on her podcast that demonic possession is real, and can be passed on through “intimate relationships.”

“If a person has demonic possession — I know it’s gonna sound really crazy to me saying that for some people, thinking like what?!” said Karamo. “But having intimate relationships with people who are demonically possessed or oppressed — I strongly believe that a person opens themselves up to possession. Demonic possession is real.”

I admit, I’ve not really followed the border issue. My vague perception was that Trump had stuffed things, and Biden hadn’t really done much to fix it beyond (maybe) getting kids out of cages. If he’s actually getting things fixed that would be very very good. Not that the Republicans will likely appreciate the method used.

Biden announced that immigrants with U.S. sponsors from four major origin countries could apply to come legally to the United States on a status called humanitarian parole. And according to the January immigration figures released late last week, Biden’s plan is already working.

In December 2022, the last full month before humanitarian parole, Border Patrol had 84,176 encounters with migrants from those four countries, which accounted for over 36 percent of all encounters that month at the U.S.-Mexico border. In January, the number had already dropped to 11,909, an 86 percent decline. As a result, overall U.S.-Mexico border encounters are down 42 percent.

Firstly, it’s almost impossible to comply with. The resources required by web hosting companies to comply with the requirement (and to be able to afford the cost of any breach) would be so significant that only a small number of companies could provide such services. That would be bad for consumers.

Secondly, it would be very difficult to police. The resources required to police breaches would be significant and I believe the cost would significantly outweigh the benefit.

Thirdly, censorship doesn’t work. History shows that you cannot control what people think and discuss. All attempts to do so inevitably fail.

Of course, that’s not to say that there shouldn’t be limits on freedom of speech (information/data which is evidence of the commission of a crime, for example). ISPs should not be responsible for policing those things though, which is what the case is about; that should be the State’s/cops’ job.

Firstly, it’s almost impossible to comply with. The resources required by web hosting companies to comply with the requirement (and to be able to afford the cost of any breach) would be so significant that only a small number of companies could provide such services. That would be bad for consumers.

Secondly, it would be very difficult to police. The resources required to police breaches would be significant and I believe the cost would significantly outweigh the benefit.

I disagree with this. Obviously requiring any major tech/social media company to actively police everything on their platform would be an impossible problem of scale, but that’s not what the case is about. The case is very specifically targeting the promotional/recommendation algorithms that are written, owned, run, and under the complete control of the company, and which are in fact not at all required for its basic level of operation. There’s a world of difference between prosecuting youtube because someone sneaked a neonazi video up there among the zillions of hours of video that get uploaded each day, and prosecuting youtube because youtube’s algorithm decided to promote that video relentlessly and nobody at youtube thought this was a problem or bothered to do anything about it.

The way the youtube/facebook recommendation algorithm quickly spirals down into extremism is very well known and has been well known for years and years now. Seriously, go watch a few videos about marvel movies, and see how long it takes for your recommendation feed to be full of rabid misogyny aimed at the actresses from She-Hulk, Captain Marvel etc etc. And the whole facebook far-right and QAnon echo chamber, and the way facebook’s algorithm promotes it, is just as well documented.

Thirdly, censorship doesn’t work. History shows that you cannot control what people think and discuss. All attempts to do so inevitably fail.

The intent isn’t to stop this material being thought about, or even posted. The intent is to discourage social media companies from actively promoting and cashing in on its spread. A few hundred isolated neonazis sitting in their bedrooms stewing over Mein Kampf, afraid to talk about their beliefs in public for fear of ostracism, are not so much of a problem. A few hundred neonazis on the same website, acting in concert, able to run coordinated recruitment drives, sock puppet farms, stalking/harassment campaigns and so on despite living nowhere near each other: that’s a movement that can quickly become a few thousand neonazis, or a riot, or a terrorist organisation. Of course ‘intent’ is a claim that covers a multitude of sins when it comes to giving the state more power over private speech, but I do see the problem. The recommendation algorithm (and the way it hasn’t been fixed despite operating this way for over half a decade) means that the social media companies have stepped over the line from merely hosting this stuff to promoting it.

Of course, that’s not to say that there shouldn’t be limits on freedom of speech (information/data which is evidence of the commission of a crime, for example). ISPs should not be responsible for policing those things though, which is what the case is about; that should be the State’s/cops’ job.

If I owned a billboard hire company and I sold advertising space to ISIS who used it to post recruitment ads for suicide bombers and promote terrorism and murder, then I suspect I’d certainly have trouble with the law. This is exactly what the big social media companies are doing, but because it’s on the internet they’re hiding behind a loophole that makes it legal.