Trying to be a bit quicker with this reply; it's too nice a day to spend inside writing this stuff.

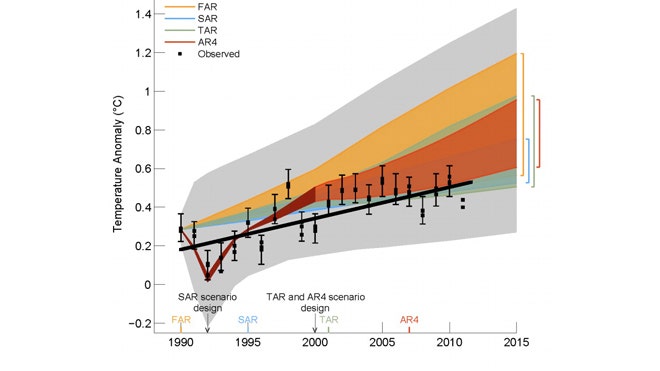

The IPCC has slightly overestimated atmospheric temperature increase over the past 20 years, but over the same period they've significantly underestimated oceanic temperature increases. Which is why we're seeing Arctic sea ice melt much faster than we expected. The overall heat increase (sum of oceanic and atmospheric) has been pretty close to what was predicted. This is not a debate about whether warming is happening, it's about the outcomes of it.

With regard to regional results - you can average the output of model runs regionally as well as globally. If you run X model iterations and 80% of them simulate increasing temperatures in southern Australia, for instance, while 20% have that area remaining around the same and none simulate cooling temperatures there, then you can make a prediction from that. The output of a climate model includes all its parameter data (temp, wind, cloud cover, etc) for all of its cells - this can all be number-crunched and analysed in many different ways, from grouping blocks regionally to counting the % of total times winds exceed a given threshold and result in hurricane conditions.
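To make the "80% of runs" idea concrete, here's a rough sketch in Python. Everything in it is made up for illustration: the grid size, the synthetic "runs", the 0.1 degree threshold and the block of cells standing in for a region are all my assumptions, not how any real modelling group does it. Real output would be read from model files rather than generated randomly.

```python
import random

def regional_mean(grid, rows, cols):
    """Average a 2D field over a rectangular block of cells."""
    values = [grid[r][c] for r in rows for c in cols]
    return sum(values) / len(values)

def ensemble_agreement(runs, rows, cols, threshold=0.1):
    """Classify each run's regional mean change as warming, flat or
    cooling, and return the fraction of runs in each class."""
    counts = {"warming": 0, "flat": 0, "cooling": 0}
    for grid in runs:
        change = regional_mean(grid, rows, cols)
        if change > threshold:
            counts["warming"] += 1
        elif change < -threshold:
            counts["cooling"] += 1
        else:
            counts["flat"] += 1
    return {k: v / len(runs) for k, v in counts.items()}

# Synthetic stand-in for model output: 50 runs on a 10x10 grid of
# temperature changes in degrees C (invented numbers, not real data).
rng = random.Random(0)
runs = [[[rng.gauss(0.5, 0.4) for _ in range(10)] for _ in range(10)]
        for _ in range(50)]

# Hypothetical "region": rows 6-9, columns 2-5 of the grid.
print(ensemble_agreement(runs, range(6, 10), range(2, 6)))
```

The same pattern works for any other variable in the cells (wind, cloud cover and so on): pick the cells, reduce each run to a number, then look at how the runs are distributed.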

Generally, in the climate modelling field, models are calibrated by tweaking the parameters so that the output matches one set of historical data as closely as possible, while another set is purposely held back during model development so it can be used for verification afterwards. So maybe you develop your model using historical data on cloud cover, atmospheric temperature, oceanic temperature, ice coverage etc, then run your model and see how well it matches deep ocean current data - if it's way out, then you've stuffed up.
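A toy version of that calibrate-then-verify workflow, just to show the shape of it. The one-parameter "model", the made-up link between surface temperature and ocean current, and all the numbers are hypothetical; a real climate model has vastly more parameters and nothing this tidy.

```python
def toy_model(sensitivity, forcing):
    """Hypothetical toy model returning (surface_temp, ocean_current)."""
    surface = [sensitivity * f for f in forcing]
    current = [10.0 - 2.0 * t for t in surface]  # invented dependence
    return surface, current

def rmse(a, b):
    """Root-mean-square error between two equal-length series."""
    return (sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)) ** 0.5

def calibrate(forcing, observed_surface, candidates):
    """Pick the parameter whose surface output best matches history."""
    return min(candidates,
               key=lambda s: rmse(toy_model(s, forcing)[0], observed_surface))

forcing = [0.0, 0.5, 1.0, 1.5, 2.0]

# Invented "historical" records. The surface series is used for tuning;
# the ocean current series is held back for verification only.
observed_surface = [0.0, 0.42, 0.79, 1.21, 1.6]
observed_current = [10.0, 9.1, 8.5, 7.6, 6.7]

candidates = [i / 100 for i in range(0, 201)]
best = calibrate(forcing, observed_surface, candidates)

# Verification step: compare against data the tuning never saw.
_, predicted_current = toy_model(best, forcing)
print("calibrated sensitivity:", best)
print("error on held-out data:", rmse(predicted_current, observed_current))
```

If the held-out error comes back large, that's the "you've stuffed up" signal: the model has been fitted to the tuning data without capturing the underlying behaviour.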

Even so, as you say, models are not perfect. Nobody argues that they are. But in the absence of a future-predicting machine, they're the only thing we have - frankly, if models of complex situations in general were useless, nobody would rely on them in any field, yours included.

And to be honest, right now the modellers aren't interested in working out whether CO2 is causing the atmosphere to heat. They don't need to. We have really basic data and knowledge that effectively proves that.

1) we know that increasing the % of CO2 in air causes it to heat faster. We have a thorough understanding of why (IR absorption spectra etc).

2) we know that we are increasing the % of CO2 in the air. We know where it comes from and we have measured the increase.

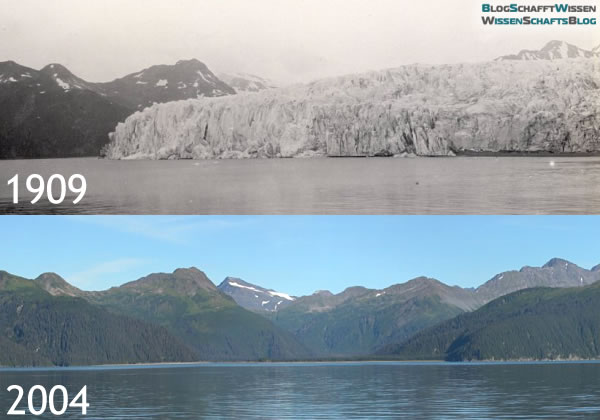

3) we know that the earth is heating, both through measurements at weather stations and through satellite measurements monitoring the amount of outgoing infrared radiation.

Once again, the burden of proof here is on the denialists. We have a very solid body of physics/chemistry that predicts outcome A should we perform action X, and we have an experiment (the earth) in which we are performing action X and in which we are observing outcome A. A disproof at this point is going to have to be pretty special, and 'the models aren't perfect' isn't going to cut it.

What the modellers are trying to do now is model the expected course, speed, amount, and regional implications of warming under different CO2 emission regimes, because we need to know this stuff, both to know precisely how quickly and how far we have to cut emissions to keep warming below dangerous levels, and to help plan adaptation and mitigation measures. And unfortunately, here is where the imperfect models hurt science politically - if a model gets one thing wrong (and they all do, because they are necessarily imperfect attempts to simulate arguably the most complex system on earth), it's forever pointed at as proof that AGW isn't happening at all and used to discredit the entire body of climate science. Which is of course ridiculous.