Hey BBB. I actually haven’t set the final teams for any games apart from Giants - Bombers. Decided just to leave it until tonight. Both teams are fairly close, I would say, but it will be interesting to see how much the prediction deviates. I can re-run it now if you tell me who the Giants outs are?

Hey frosty - I basically did a correlation assessment, standard variable importance and recursive feature elimination.

It did throw out variables that I had put considerable time into figuring out and coding, which was a little devastating. The end model is actually not the totally optimised one - I decided to keep the complete set of player metrics in there, and remove a couple of hard-to-explain ones. The overall result varied by no more than 1-2 tips per year, so I was willing to cop it.
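For anyone curious what the first of those steps can look like, here is a minimal sketch of a correlation filter. It is not the actual pipeline used for this model: the feature names, the numbers and the 0.9 threshold are all made up for illustration, and the variable importance and recursive elimination steps are not shown.

```python
from itertools import combinations
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(vx * vy)


def drop_correlated(features, threshold=0.9):
    """For each highly correlated pair, keep the earlier feature and drop the later one."""
    dropped = set()
    for a, b in combinations(features, 2):
        if a in dropped or b in dropped:
            continue
        if abs(pearson(features[a], features[b])) > threshold:
            dropped.add(b)
    return [name for name in features if name not in dropped]


# Hypothetical per-game player metrics (illustrative values only).
features = {
    "disposals": [20, 25, 30, 22, 28],
    "kicks": [10, 13, 15, 11, 14],  # tracks disposals closely, so it is redundant
    "tackles": [5, 2, 4, 6, 3],
}

kept = drop_correlated(features)
print(kept)
```

Which feature of a correlated pair gets dropped is a judgment call; this sketch simply keeps whichever was listed first.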

Also, as much as I love Essendon, the bookies have GWS at 1.61 as well.

The picks of the pundits in the HS appear to be around 30 for GWS and 4 for Essendon.

JLT game impressions beat Preseason hype, I guess.

Backing the home team in rnd 1 is the smart play more often than not.

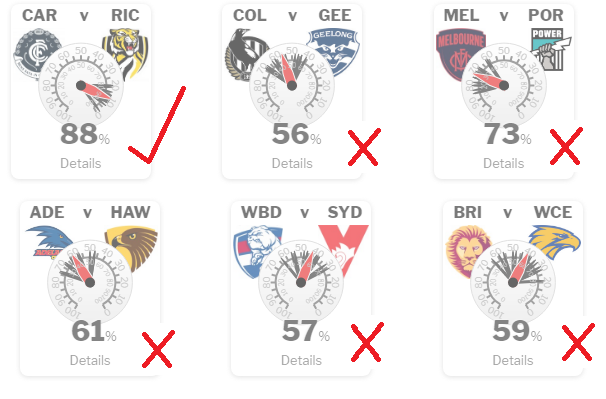

Let’s look at the aggregated results so far. Massive fail of the models Squiggle bases its predictions on.

Wow, looks exactly like my own predictions!

Coincidence? I think not!

R1 is always a crapshoot.

Plenty of sides playing above themselves for a good season start, some slow starters due to deep finals runs last year who are not too concerned about an early loss, and some who are probably getting ahead of themselves. Let’s hope we are the first one.

Is there a comparison to how stat predictions go against humans?

Would be interesting to see.

Pretty sure you can see a distribution of “humans” on footytips.com or similar. Then just draw the line where the system fits on that distribution. It won’t be better than all of them, but what do you consider a good result? 1, 2 standard deviations above the mean?
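As a rough sketch of that idea: take the tipsters' scores, work out the mean and standard deviation, and see how many standard deviations above the mean the model sits. The scores below are invented for illustration, not pulled from any tipping site.

```python
from statistics import mean, pstdev


def z_score(model_tips, human_tips):
    """How many standard deviations the model sits above (or below) the human mean."""
    mu = mean(human_tips)
    sigma = pstdev(human_tips)  # population standard deviation of the tipsters
    return (model_tips - mu) / sigma


# Hypothetical correct-tip counts for nine human tipsters in one round.
human_tips = [4, 5, 5, 6, 6, 6, 7, 7, 8]
model_tips = 8  # hypothetical model score for the same round

z = z_score(model_tips, human_tips)
print(f"model is {z:.2f} standard deviations from the human mean")
```

Over a whole season you would use season totals rather than a single round, since one round is far too noisy to say much.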

Disagree

Footytips.com has Collingwood and FarkCarlton supporters on it, skewing the distribution of “humans”

I did qualify it with the quotation marks

This is why margin prediction is a better indicator than winner-takes-all approaches.
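A quick sketch of the difference, with made-up margins (positive means a home win): two models can land the same number of tips while one is much closer on the margins.

```python
def score(predicted_margins, actual_margins):
    """Return (correct tips, mean absolute margin error) for one set of predictions."""
    pairs = list(zip(predicted_margins, actual_margins))
    # A tip is correct when predicted and actual margins have the same sign.
    # A draw (actual margin of 0) counts as a miss in this simple version.
    tips = sum(1 for p, a in pairs if p * a > 0)
    mae = sum(abs(p - a) for p, a in pairs) / len(pairs)
    return tips, mae


# Hypothetical results for three games.
actual = [12, 3, -40]
model_a = [10, -5, 20]   # cautious margins
model_b = [40, -30, 60]  # same winners picked, far more confident

tips_a, mae_a = score(model_a, actual)
tips_b, mae_b = score(model_b, actual)
print(tips_a, round(mae_a, 1))
print(tips_b, round(mae_b, 1))
```

Both hypothetical models tip the same winners, so a tips-only ladder cannot separate them, while the margin error can.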

I’d say the models gave Brisbane and the Doggies a better chance than many punters would have. The only outright howler was the Dees vs Port, but given the Dees are probably the most hyped up team of 2019 I’d say most people got that one wrong as well.

Calm down, I think you will find the Punters, or “the crowd” also performed badly. A model is only failing when it is not predicting what other models are. There is no evidence of that so far.

Squiggle is actually winning, I am coming second last (bad football week all in all) but I am not concerned due to the previous comments. Massey Ratings won last year and is down there with me.

Focussing on players individually, the following players performed well in excess of their normal rating.

Taylor, Taranto, Hopper, DeBoer, Lloyd, Tomlinson, Keefe. Either we let them or they have improved up to 100% over the preseason.

Our ruckmen were awesome!!!

Pretty much confirms what we saw - all bar 3 players were well below their usual performance levels.

Saad the only standout from a Bombers point of view.

I don’t see how Guelfi was rated that high, I thought he was atrocious.

Ha, I thought Saad was sub-par (by his standards).